AI for Time Series: Model Types (Part 2)

from the series: "The Evolution of Artificial Intelligence for Sequential Data"

The first installment examined how (S)ARIMA models use autocorrelation to make forecasts. This part turns to neural networks.

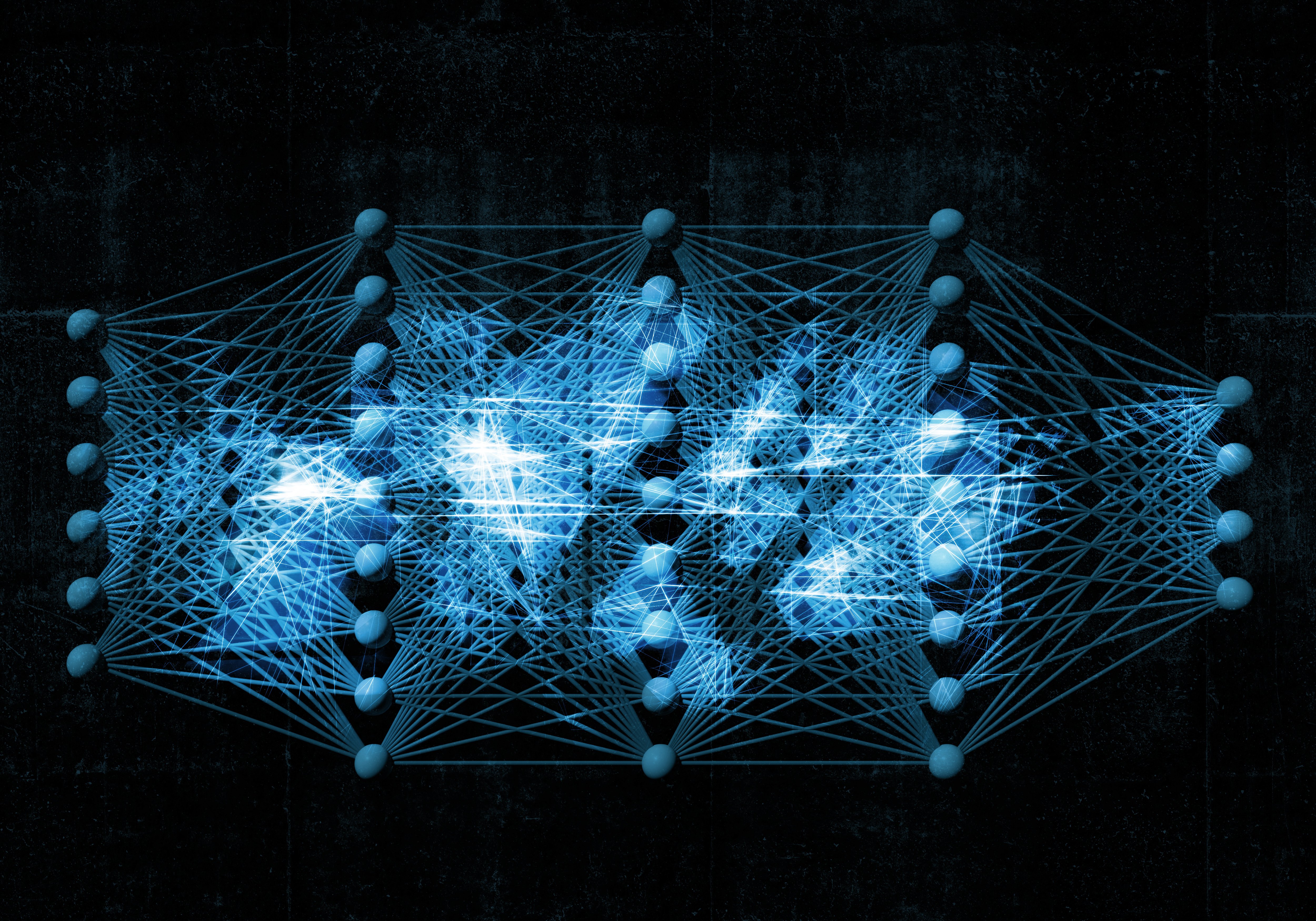

Deep Learning with Neural Networks

Forecasts from AI models built as (artificial) neural networks do not rely on hand-selected statistical features. Recurrent neural networks also use past values for forecasting, but information from further back in the past is passed from one time step to the next only indirectly, through an internal memory state called the hidden state.

How a new data point changes the value in memory is the same at every time step and is controlled, like much of a neural network's behavior, by weights. Other weights determine how the output, for example the forecast of the next data point, is generated from the memory. All of these weights are the result of model training, whose goal is to find the weights that best capture the relevant information in the data and so produce accurate forecasts.
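The update described above can be sketched in a few lines of NumPy. This is a minimal illustration, not a trained model: it assumes a simple recurrent cell with a tanh activation, and the names `rnn_forecast`, `w_in`, `w_rec`, and `w_out` as well as the random weights are placeholders chosen for this example.

```python
import numpy as np

def rnn_forecast(series, w_in, w_rec, b_h, w_out, b_out):
    """Run a minimal recurrent cell over a 1-D series.

    Returns the final hidden state and a one-step-ahead forecast.
    """
    h = np.zeros(w_rec.shape[0])  # hidden state (the "memory"), initially empty
    for x_t in series:
        # The same weights are applied at every time step.
        h = np.tanh(w_in * x_t + w_rec @ h + b_h)
    # A separate set of weights turns the memory into an output.
    y_next = w_out @ h + b_out
    return h, y_next

# Illustrative, untrained weights (assumption: hidden size 4).
rng = np.random.default_rng(0)
hidden_size = 4
w_in = rng.normal(scale=0.5, size=hidden_size)
w_rec = rng.normal(scale=0.5, size=(hidden_size, hidden_size))
b_h = np.zeros(hidden_size)
w_out = rng.normal(scale=0.5, size=hidden_size)
b_out = 0.0

series = np.sin(np.linspace(0, 6, 30))  # synthetic stand-in for a time series
h, y_next = rnn_forecast(series, w_in, w_rec, b_h, w_out, b_out)
print(h.shape, float(y_next))
```

Because the weights here are random, the forecast itself is meaningless. Training would adjust `w_in`, `w_rec`, and `w_out` so that `y_next` comes close to the observed next value.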

Challenge 1: Long-Term Effects

When processing a time series, a recurrent neural network is unrolled along the time axis into a long chain of neurons, which is why it counts as a deep learning method. This structure makes such networks versatile, but despite their memory they are not well suited to long sequences. The more time steps the network processes, the longer the chain of calculations becomes, and the harder it is during training to find weights that capture the influence of data far in the past on future measurements.
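One common way to see why long chains are hard to train is to measure how strongly the hidden state after k steps still depends on the initial state. The sketch below multiplies the per-step Jacobians of a tanh recurrent cell and prints the norm of the result. It rests on an assumption made purely for illustration: the recurrent weight matrix `w_rec` is rescaled to a spectral norm of 0.9, which makes the signal shrink (the effect usually called vanishing gradients). With larger weights the same product can grow instead.

```python
import numpy as np

def gradient_norms_through_time(series, w_in, w_rec):
    """Spectral norm of d h_k / d h_0 for k = 1..len(series) in a tanh RNN."""
    size = w_rec.shape[0]
    h = np.zeros(size)
    jac = np.eye(size)  # accumulated Jacobian d h_k / d h_0
    norms = []
    for x_t in series:
        h = np.tanh(w_in * x_t + w_rec @ h)
        # Jacobian of one step w.r.t. the previous hidden state:
        # diag(1 - h^2) @ w_rec
        step_jac = (1.0 - h**2)[:, None] * w_rec
        jac = step_jac @ jac
        norms.append(np.linalg.norm(jac, 2))
    return np.array(norms)

rng = np.random.default_rng(0)
hidden_size = 8
w_in = rng.normal(size=hidden_size)
w_rec = rng.normal(size=(hidden_size, hidden_size))
w_rec *= 0.9 / np.linalg.norm(w_rec, 2)  # assumption: spectral norm 0.9

series = np.sin(np.linspace(0, 12, 50))  # synthetic input
norms = gradient_norms_through_time(series, w_in, w_rec)
for k in (1, 10, 25, 50):
    print(f"after {k:2d} steps: {norms[k - 1]:.2e}")
```

In this setting the norm after k steps cannot exceed 0.9 to the power k, so after 50 steps almost no training signal from the earliest data points remains.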

To model the long-term dependencies that frequently occur in time series, recurrent neural networks have been extended with Long Short-Term Memory (LSTM). This memory can selectively read from the input data, retain stored information, or forget parts of it, which effectively shortens the chains of calculation.
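The reading, retaining, and forgetting described above correspond to the gates of an LSTM cell. The following is a minimal NumPy sketch of one step of the commonly used LSTM formulation, not the implementation of any particular library. The helper names `lstm_step`, `W`, `U`, and `b`, and the stacking order of the gates, are assumptions for this example. The demonstration sets the biases by hand so that the forget gate stays open and the input gate stays closed, which shows how the cell state can be carried across many steps almost unchanged.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x_t, h_prev, c_prev, W, U, b):
    """One LSTM step. W: (4n, d), U: (4n, n), b: (4n,).

    Rows are stacked in the order: forget, input, candidate, output.
    """
    n = h_prev.shape[0]
    z = W @ x_t + U @ h_prev + b
    f = sigmoid(z[0:n])              # forget gate: how much memory to keep
    i = sigmoid(z[n:2 * n])          # input gate: how much new input to read
    g = np.tanh(z[2 * n:3 * n])      # candidate values to write
    o = sigmoid(z[3 * n:4 * n])      # output gate: how much memory to expose
    c = f * c_prev + i * g           # updated cell state (long-term memory)
    h = o * np.tanh(c)               # updated hidden state
    return h, c

rng = np.random.default_rng(0)
n, d = 3, 1
W = rng.normal(scale=0.1, size=(4 * n, d))
U = rng.normal(scale=0.1, size=(4 * n, n))
b = np.zeros(4 * n)
b[0:n] = 20.0        # hand-set: forget gate close to 1 (retain)
b[n:2 * n] = -20.0   # hand-set: input gate close to 0 (ignore input)

h = np.zeros(n)
c = np.array([0.5, -1.0, 2.0])
c_start = c.copy()
for x in np.sin(np.linspace(0, 12, 100)):
    h, c = lstm_step(np.array([x]), h, c, W, U, b)

print(np.max(np.abs(c - c_start)))  # stays tiny: memory survived 100 steps
```

In a trained network the gate values are not fixed by hand but depend on the input and the hidden state, so the cell learns when to keep, overwrite, or expose its memory.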

Challenge 2: Interpretability

Applied to the example time series, a recurrent neural network would ideally learn to distinguish the large cyclical fluctuations from the random deviations between successive data points, the "noise." Compared with an (S)ARIMA model, it has more ways to describe the influence of past values, which allows it to capture complex patterns better. However, because of the nested structure of a neural network, its weights cannot be interpreted as directly as those of an (S)ARIMA model.

It often remains unclear which characteristics of the data contribute to a forecast and in what way. This is why many deep learning models are regarded as black boxes whose inner workings remain hidden. Improving interpretability is a goal of current scientific research and is pursued under the term Explainable AI (xAI).

In summary:

- Recurrent neural networks are deep learning models that can recognize relationships in sequential data and make forecasts based on past values.

- Training recurrent neural networks becomes more difficult the more time steps are processed. Long-term dependencies typically cannot be captured well.

- Extensions such as Long Short-Term Memory use relevant information more effectively and make it easier to account for long-term effects.

- Deep learning models are often black boxes in the sense that their weights do not make it easy to understand how a forecast comes about. It is therefore important to quantify the reliability of forecasts statistically and to monitor them continuously.
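As one possible way to quantify reliability and monitor it, the sketch below derives an empirical error band from forecast errors on held-out data and then checks how often new forecasts fall inside it. This is only one simple approach among many. The function names `residual_interval` and `coverage_rate`, the 90% target, and the synthetic data are assumptions made for this illustration.

```python
import numpy as np

def residual_interval(residuals, coverage=0.9):
    """Empirical error band that contains `coverage` of past forecast errors."""
    alpha = 1.0 - coverage
    lo, hi = np.quantile(residuals, [alpha / 2, 1 - alpha / 2])
    return float(lo), float(hi)

def coverage_rate(y_true, y_pred, lo, hi):
    """Share of forecasts whose error falls inside the band [lo, hi]."""
    err = np.asarray(y_true) - np.asarray(y_pred)
    return float(np.mean((err >= lo) & (err <= hi)))

# Synthetic stand-ins for observed values and model forecasts.
rng = np.random.default_rng(0)
y_val = rng.normal(size=1000)
pred_val = y_val + rng.normal(scale=0.3, size=1000)

# Calibrate the band on held-out data.
lo, hi = residual_interval(y_val - pred_val, coverage=0.9)

# Monitoring: check the band against forecasts made later.
y_new = rng.normal(size=1000)
pred_new = y_new + rng.normal(scale=0.3, size=1000)
rate_new = coverage_rate(y_new, pred_new, lo, hi)
print(f"band: [{lo:.2f}, {hi:.2f}], coverage on new data: {rate_new:.2f}")
```

If the coverage on new data drops well below the target, the forecast errors have changed in character, which can be a signal to investigate or retrain the model.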