The AI Pyramid as a Blueprint for Intelligent Machines and Processes

Now that it is clear what artificial intelligence (AI) is capable of, many companies face the challenge of quickly determining whether AI can also create added value for their machines and processes. If it can, the new AI functions should be put into productive use as quickly as possible or offered as a market-ready product.

In this article, you will find a blueprint for upgrading machines and technical processes with AI and operating them reliably under industrial conditions.

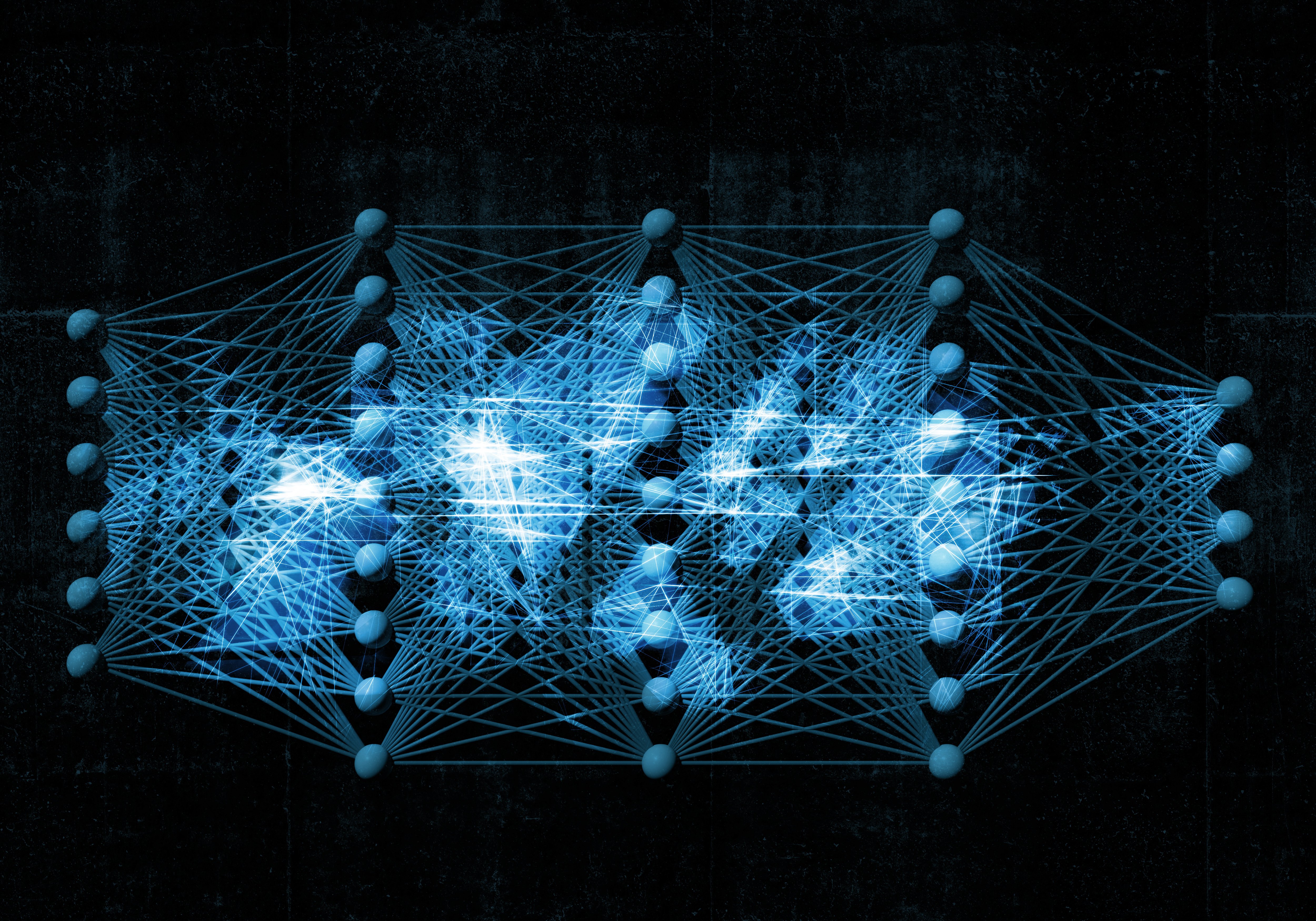

The AI pyramid: one blueprint for every use case

Whether artificial intelligence is used for predictive quality assurance in production or to optimize energy consumption in industrial plants, the required AI components can be built and operated according to the same scheme. We illustrate this blueprint as an AI pyramid because its stages build on each other and together lead to one overarching goal: the reliable productive use of AI.

Stage 1: Data engineering

Data engineering converts the raw data from machines, sensor-equipped devices, and processes into a form suitable for AI. The main tasks involved in turning raw data into suitable AI training data are:

- AI data model: The data schemas and data interfaces defined in the data model determine how data is collected and retrieved for AI purposes. In particular, data storage, the relationships between different data units, and data access must be defined.

- Data preprocessing: To convert raw data into a format suitable for AI algorithms, the data is cleaned (e.g., missing values are handled), recoded (e.g., non-numerical data is converted into numerical form), and, if necessary, reduced in dimensionality (compressed).

- Labeling: Supervised learning models, such as those used to classify error states, require labeled training data. During labeling, each data record is assigned its correct label or category so that the model can learn from these examples.
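The preprocessing tasks can be illustrated with a minimal sketch in plain Python. It assumes hypothetical tabular records with a numeric field `temperature` that may be missing and a non-numerical field `mode`. Missing values are cleaned by mean imputation and `mode` is recoded by one-hot encoding. Dimensionality reduction is left out for brevity, and real pipelines would typically rely on dedicated data-processing libraries instead.

```python
from statistics import mean
from typing import Optional

# Hypothetical raw records: temperature may be missing (None),
# the operating mode is a non-numerical category.
RAW = [
    {"temperature": 71.0, "mode": "idle"},
    {"temperature": None, "mode": "load"},
    {"temperature": 75.0, "mode": "load"},
    {"temperature": 79.0, "mode": "startup"},
]


def impute_mean(values: list[Optional[float]]) -> list[float]:
    """Cleaning: replace missing values with the mean of the observed ones.

    Assumes at least one value is observed.
    """
    fill = mean(v for v in values if v is not None)
    return [fill if v is None else v for v in values]


def one_hot(values: list[str]) -> tuple[list[str], list[list[int]]]:
    """Recoding: turn each category into its own 0/1 indicator column."""
    categories = sorted(set(values))
    rows = [[1 if v == c else 0 for c in categories] for v in values]
    return categories, rows


def preprocess(records: list[dict]) -> tuple[list[str], list[list[float]]]:
    """Return a column header and a purely numerical feature matrix."""
    temps = impute_mean([r["temperature"] for r in records])
    categories, mode_cols = one_hot([r["mode"] for r in records])
    header = ["temperature"] + [f"mode={c}" for c in categories]
    matrix = [[t] + cols for t, cols in zip(temps, mode_cols)]
    return header, matrix


if __name__ == "__main__":
    header, matrix = preprocess(RAW)
    print(header)
    for row in matrix:
        print(row)
```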

The result of data engineering in stage 1 is data that is clean, structured, and, if necessary, labeled so that it can be used for training AI models.
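The sketch below shows how a data model and labeling can fit together. It assumes a hypothetical schema with two related entities, sensor data windows and error events from a maintenance log, linked by `machine_id`. A window is labeled `fault` if an error for the same machine was logged within it and `ok` otherwise. This is only one possible labeling strategy; in practice, labels may also come from manual inspection or quality checks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Window:
    """Hypothetical data-model entity: one time window of sensor data."""
    machine_id: str
    start: float  # seconds relative to an arbitrary reference time
    end: float


@dataclass(frozen=True)
class ErrorEvent:
    """Hypothetical data-model entity: one entry of a maintenance log."""
    machine_id: str
    timestamp: float
    code: str


def label_windows(windows: list[Window], events: list[ErrorEvent]) -> list[str]:
    """Label a window 'fault' if an error of the same machine lies in [start, end)."""
    labels = []
    for w in windows:
        hit = any(
            e.machine_id == w.machine_id and w.start <= e.timestamp < w.end
            for e in events
        )
        labels.append("fault" if hit else "ok")
    return labels


if __name__ == "__main__":
    windows = [Window("M1", 0, 60), Window("M1", 60, 120), Window("M2", 0, 60)]
    events = [ErrorEvent("M1", 75.0, "E42")]
    print(label_windows(windows, events))  # ['ok', 'fault', 'ok']
```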

Stage 2: AI engineering

In AI engineering, task-specific AI models are trained, evaluated, and fine-tuned on the training data generated in stage 1 until their results meet the required quality standards. The main tasks involved in creating high-quality AI models are:

- Training with an AI Model Factory: Instead of training a single AI model, it is now common to train a whole set of potentially suitable models in parallel. Automation tools are used for the rapid development, testing, and deployment of AI models to keep AI development scalable and efficient. Without an automated AI Model Factory, retraining AI models during operation is hardly practical.

- Feature engineering: Raw data from complex industrial processes, in particular, often does not reflect the relevant process states directly enough for AI models to achieve high-quality results easily. Domain-specific feature engineering, i.e., deriving informative variables from the raw data based on process knowledge, can significantly improve an AI model's ability to learn from such data.

- Evaluation, fine-tuning, and selection of AI models: The quality of a trained AI model's results can be evaluated using various criteria or metrics. The preferred metric (e.g., precision or recall) determines how the AI will later behave in specific situations. Optimizing for recall, for example, means missing as few real faults as possible at the cost of more false alarms, i.e., erring on the side of caution and issuing too many warnings rather than too few. Fine-tuning, i.e., targeted experimentation with different algorithms, features, and hyperparameters, then optimizes the selected metric. The AI model with the best evaluation is selected for productive use.
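The feature-engineering step can be made concrete with a minimal sketch for a common industrial case. It assumes a raw vibration signal given as a list of samples and derives three classic condition-monitoring features: root mean square (RMS), peak-to-peak amplitude, and crest factor (peak amplitude divided by RMS). The function names are illustrative. Which features are actually informative depends on the specific process and requires domain knowledge.

```python
import math


def rms(signal: list[float]) -> float:
    """Root mean square: a measure of the overall vibration energy."""
    return math.sqrt(sum(x * x for x in signal) / len(signal))


def peak_to_peak(signal: list[float]) -> float:
    """Distance between the largest and the smallest sample."""
    return max(signal) - min(signal)


def crest_factor(signal: list[float]) -> float:
    """Peak amplitude relative to RMS; short impulses (e.g., impacts) raise it."""
    return max(abs(x) for x in signal) / rms(signal)


def extract_features(signal: list[float]) -> dict[str, float]:
    """Condense a raw signal window into a few interpretable features."""
    return {
        "rms": rms(signal),
        "peak_to_peak": peak_to_peak(signal),
        "crest_factor": crest_factor(signal),
    }


if __name__ == "__main__":
    # Hypothetical vibration snippet (arbitrary units) with one impulse.
    snippet = [0.0, 1.0, 0.0, -1.0, 0.0, 4.0, 0.0, -1.0]
    print(extract_features(snippet))
```

A model trained on such features receives a compact, physically meaningful description of each signal window instead of thousands of raw samples.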

The result of AI engineering in stage 2 is a trained AI model that performs a specific task under specified quality standards.
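A deliberately simplified sketch shows how a model factory, the choice of metric, and model selection interact. The candidate models are hypothetical threshold classifiers on a single feature score, standing in for real algorithms and hyperparameter settings, and the validation data is made up. Each candidate is evaluated with precision (the share of raised warnings that were real faults) and recall (the share of real faults that triggered a warning). Candidates below a minimum precision are rejected as an example acceptance criterion, and the best remaining candidate according to the chosen metric is selected.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Scores:
    precision: float
    recall: float


def evaluate(predictions: list[bool], truth: list[bool]) -> Scores:
    """Precision = TP / (TP + FP), recall = TP / (TP + FN); True means 'fault'."""
    tp = sum(p and t for p, t in zip(predictions, truth))
    fp = sum(p and not t for p, t in zip(predictions, truth))
    fn = sum(t and not p for p, t in zip(predictions, truth))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return Scores(precision, recall)


def threshold_model(threshold: float) -> Callable[[float], bool]:
    """Hypothetical stand-in for a trained model: flags a fault above a threshold."""
    return lambda score: score > threshold


# Hypothetical "model factory" output: several candidate configurations.
CANDIDATES = {f"t={t}": threshold_model(t) for t in (1.0, 2.0, 3.0)}

# Hypothetical labeled validation data: one feature score per sample.
VALIDATION_INPUTS = [1.2, 2.5, 3.1, 0.8, 4.0, 2.9]
VALIDATION_TRUTH = [False, True, True, False, True, False]


def select_model(
    candidates: dict[str, Callable[[float], bool]],
    inputs: list[float],
    truth: list[bool],
    metric: str,
    min_precision: float = 0.0,
) -> tuple[str, Scores]:
    """Evaluate all candidates and return the best accepted one by `metric`."""
    results = {}
    for name, model in candidates.items():
        scores = evaluate([model(x) for x in inputs], truth)
        if scores.precision >= min_precision:  # example acceptance criterion
            results[name] = scores
    if not results:
        raise ValueError("no candidate meets the acceptance criterion")
    best = max(results, key=lambda n: getattr(results[n], metric))
    return best, results[best]


if __name__ == "__main__":
    # Favor recall (few missed faults), but require at least 70 % precision.
    print(select_model(CANDIDATES, VALIDATION_INPUTS, VALIDATION_TRUTH, "recall", 0.7))
    # -> ('t=2.0', Scores(precision=0.75, recall=1.0))
    # Favor precision (few false alarms).
    print(select_model(CANDIDATES, VALIDATION_INPUTS, VALIDATION_TRUTH, "precision"))
    # -> ('t=3.0', Scores(precision=1.0, recall=0.6666666666666666))
```

With the same candidates, favoring recall selects the more cautious `t=2.0`, whereas favoring precision selects `t=3.0`, which misses one real fault. This is the behavioral trade-off described in the evaluation step.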

Stage 3: AI operations

For the practical application of the AI model developed in stage 2, the focus shifts to its deployment, management, and maintenance in the production environment. The following points must be ensured, particularly in industrial applications:

- Operation as a real-time AI service: The trained AI model is embedded in the production environment as an AI service and can be accessed or executed at any time. Machine and process data are processed by the AI service in real time to generate diagnoses, predictions, or suggestions.

- Management of AI models: AI models have a limited life cycle. During this cycle, they must be versioned, updated, and eventually replaced or decommissioned. This ensures that AI models remain effective and relevant over time.

- Continuous quality monitoring: Especially in industrial applications, it must be ensured that AI models always perform as well as they did when they were accepted in stage 2. This includes monitoring the quality of the AI results (model drift) and detecting changes in the input data (data drift) in good time. Any deterioration must trigger an immediate response.

- Automatic AI retraining: Automatic retraining mechanisms counteract problems such as model drift and data drift and also improve AI models as the underlying database keeps growing. This ensures that models are updated regularly to maintain or improve their performance without manual intervention.
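Data-drift detection, one building block of continuous monitoring and automatic retraining, can be sketched with the Population Stability Index (PSI). PSI compares the distribution of an input variable during operation with its distribution in the training data. With $e_i$ and $a_i$ as the shares of reference and current values in bin $i$, it is defined as $\mathrm{PSI} = \sum_i (a_i - e_i)\ln(a_i / e_i)$. The bin edges, the small constant that avoids $\ln 0$, and the retraining threshold of 0.25 are assumptions. The 0.25 threshold is a frequently cited rule of thumb, not a universal standard. PSI covers data drift only. Detecting model drift additionally requires comparing predictions with actual outcomes.

```python
import math
from bisect import bisect_right


def bin_fractions(values: list[float], edges: list[float]) -> list[float]:
    """Share of values per bin; sorted edges split the axis into len(edges) + 1 bins."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]


def psi(reference: list[float], current: list[float], edges: list[float],
        eps: float = 1e-4) -> float:
    """Population Stability Index: sum over bins of (a - e) * ln(a / e)."""
    total = 0.0
    for e, a in zip(bin_fractions(reference, edges), bin_fractions(current, edges)):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) for empty bins
        total += (a - e) * math.log(a / e)
    return total


def needs_retraining(reference: list[float], current: list[float],
                     edges: list[float], threshold: float = 0.25) -> bool:
    """Hypothetical trigger: report data drift when the PSI exceeds the threshold."""
    return psi(reference, current, edges) > threshold


if __name__ == "__main__":
    edges = [70.0, 75.0, 80.0, 85.0]  # hypothetical temperature bins in °C
    training = [71.0, 72.0, 74.0, 76.0, 77.0, 79.0]
    live = [81.0, 82.0, 84.0, 86.0, 87.0, 79.0]
    print(round(psi(training, live, edges), 2), needs_retraining(training, live, edges))
```

In an operations framework, a positive result from such a check would typically start the automated retraining pipeline rather than just print a value.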

A professionally set up AI operations framework results in AI models that stay up to date and reliably perform their tasks day after day under industrial conditions.
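Model management can be illustrated with a minimal in-memory model registry. The class, stage names, and artifact paths are hypothetical, and production setups typically use dedicated registry tooling with persistent storage. The sketch follows the life cycle described under model management. Every retrained model gets a new version. Promotion replaces the production model, and the previous version is archived rather than deleted so that it can be restored if needed.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ModelVersion:
    version: int
    artifact_uri: str  # hypothetical storage location of the serialized model
    stage: str = "staging"  # life cycle: staging -> production -> archived


@dataclass
class ModelRegistry:
    """Hypothetical in-memory registry that tracks all versions of one model."""
    name: str
    versions: list[ModelVersion] = field(default_factory=list)

    def register(self, artifact_uri: str) -> ModelVersion:
        """Add a newly trained model as the next version, initially in staging."""
        mv = ModelVersion(version=len(self.versions) + 1, artifact_uri=artifact_uri)
        self.versions.append(mv)
        return mv

    def production(self) -> Optional[ModelVersion]:
        """Return the version currently serving predictions, if any."""
        return next((v for v in self.versions if v.stage == "production"), None)

    def promote(self, version: int) -> None:
        """Replace the production model; the previous one is archived, not deleted."""
        target = self._get(version)
        current = self.production()
        if current is not None and current is not target:
            current.stage = "archived"
        target.stage = "production"

    def decommission(self, version: int) -> None:
        """Take a version out of service."""
        self._get(version).stage = "archived"

    def _get(self, version: int) -> ModelVersion:
        for v in self.versions:
            if v.version == version:
                return v
        raise KeyError(f"{self.name} has no version {version}")


def demo() -> ModelRegistry:
    """Register two versions, then replace v1 with a retrained v2 in production."""
    registry = ModelRegistry("fault-classifier")  # hypothetical model name
    registry.register("models/fault-classifier/1")
    registry.register("models/fault-classifier/2")
    registry.promote(1)
    registry.promote(2)
    return registry


if __name__ == "__main__":
    print([(v.version, v.stage) for v in demo().versions])
```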

Isn't there something missing?

Yes, there is one more step between the AI operations framework and daily AI use. Before the new AI function or AI device can be used in productive operation, it usually has to be integrated technically and procedurally into the existing system landscape. However, if the AI operations framework is packaged as a modular software component, as offered by aiXbrain with its AI operating system Dataray, this step is the same as integrating conventional software and automation solutions and thus becomes a standard task.

Good to know

Implementing all tasks of the AI pyramid professionally requires a mix of expertise that is not obvious to many companies whose core business is not AI. Data engineering and feature engineering primarily require knowledge of data science and engineering. Developing high-quality AI models and operating them in the AI operations framework, by contrast, requires in-depth knowledge of machine learning engineering and machine learning operations (MLOps). Furthermore, without the corresponding expertise in computer science or software engineering, the developed AI cannot be packaged, integrated, and maintained as a scalable software module. And since individual stages or the entire AI pyramid may have to be worked through again several times over a typical AI life cycle, the necessary mix of expertise and resources must be available permanently.

Turning a technically viable AI use case into a profitable AI offering can therefore quickly drive costs out of control and destroy the associated business case. To prevent this, aiXbrain offers all companies the opportunity to upgrade their machines and processes with AI in an economically viable and sustainable way, through its targeted services (data engineering, AI engineering, integration) and its AI operations software Dataray.